By Scott Sung

The recently released 2025 Misinformation Survey paints a clear picture: misinformation in Taiwan is no longer an occasional phenomenon tied to political manipulation, but has become a persistent feature of the information environment.

An overwhelming 96.52% of respondents reported having encountered false information, while 96.81% believe it has already had a serious impact on society.

The survey report was funded by the Taiwan Social Resilience Research Center at National Taiwan University (NTU) and conducted by Professor Chen-Ling Hung and Associate Professor Jerry Ji-Lung Hsieh of NTU, together with researcher Greg Chih-Hsin Sheen from Academia Sinica’s Institute of Political Science. The findings were released last Thursday (April 30).

Against this backdrop, public expectations for addressing misinformation are notably high. More than 90% of respondents support government legislation to regulate misinformation, while 76.6% believe that technology companies should take a more active role in limiting its spread—even if this may affect freedom of expression. In addition, 90.7% support greater transparency in platform algorithms, and a majority believe platforms should bear more responsibility for the quality of information circulating online.

At the same time, the nature of misinformation itself is evolving. Beyond the rise of generative AI, scams have emerged as a major force shaping this landscape. The survey shows that 75% of respondents have received suspicious investment-related messages, and more than 90% believe that scam groups are now the primary producers of misinformation.

These findings align with global concerns. In the World Economic Forum’s Global Risks Report 2026, misinformation ranks as the second most significant short-term global risk, highlighting how declining information integrity and the rapid development of AI technologies are accelerating the spread of false and misleading content.


From Political Manipulation to Financial Scams

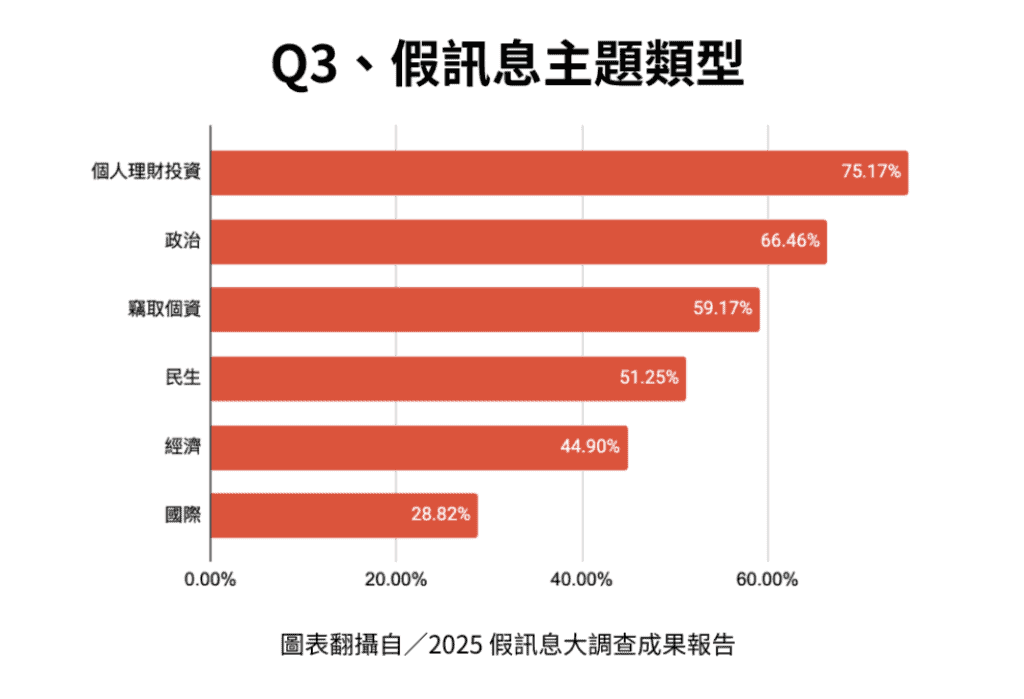

One of the most notable shifts identified in the survey is the transformation of misinformation sources and themes. While previous research often focused on politically driven misinformation, the 2025 survey shows that scam-related content—especially investment fraud and personal data theft—has overtaken political narratives as the most common type encountered by the public.

Survey results indicate that investment-related scams rank as the most frequently encountered form of misinformation, followed by political content, while messages involving personal data theft also rank highly.

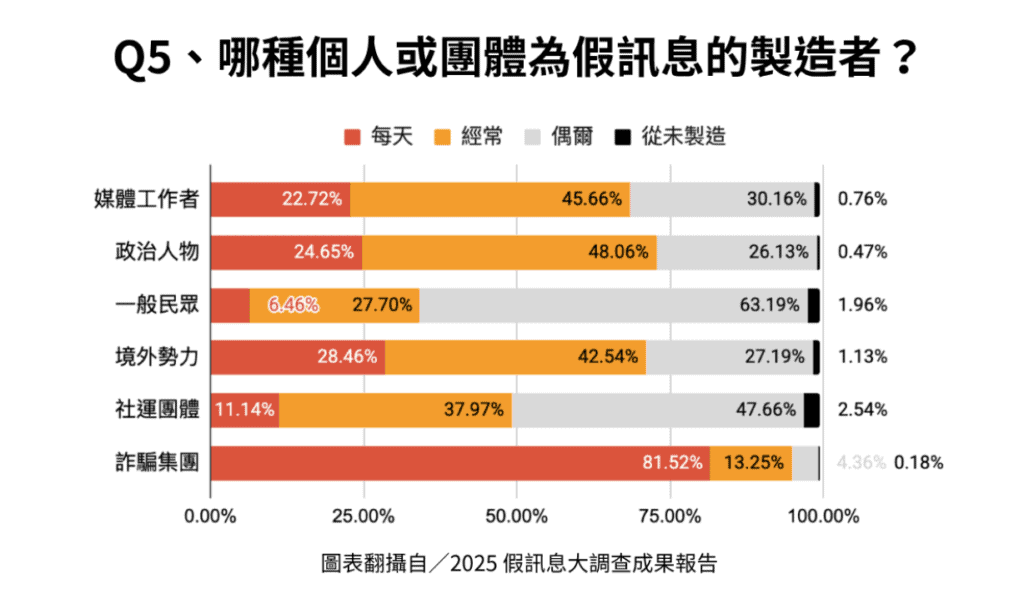

This shift is also reflected in how respondents perceive the sources of misinformation: as many as 94.77% believe that scam groups “frequently or daily” produce false information, significantly surpassing foreign actors (71%), politicians (72.71%), and media professionals.

Confidence and Blind Spots in the Age of AI

Despite the increasing complexity of misinformation, many people still rely primarily on personal judgment when evaluating information. The survey shows that a majority of respondents (63.85%) tend to assess the credibility of information based on their own knowledge and experience, rather than systematic verification methods such as checking sources or consulting reliable references.

At the same time, over 70% of respondents expressed confidence in their ability to identify AI-generated false content—revealing a striking sense of self-assurance that may not always match reality.

Experts caution that this reliance on intuition may create significant blind spots. Even professionals working in anti-fraud efforts acknowledge that rapidly evolving AI scams can be difficult to identify in real time. In some cases, distinguishing between genuine and manipulated content requires repeated exposure or even falling victim to a scam before patterns become clear.

A particularly concerning trend is the rise of so-called “AI doctors.” These may either be entirely fictional figures or real medical professionals whose likeness and voice have been manipulated.

Initially, such content may spread harmless-looking health tips or promote supplements, but in certain contexts, such as elections, it can quickly shift toward disseminating politically motivated narratives. As Zhao-Hui Huang, a board member of the Taiwan FactCheck Center, noted, these developments “not only scam people out of their money, but may also manipulate their thinking.”

Closed Messaging Spaces and the Importance of Moderation

The survey also explores the dynamics of misinformation within closed messaging platforms such as LINE, which are widely used in Taiwan. Because these spaces are not publicly visible, they have traditionally been difficult to study.

This year, researchers introduced a conjoint analysis experiment, categorizing LINE groups by six factors (membership, discussion topics, political orientation, information sources, moderation mechanisms, and group size) to assess which types of groups are perceived as most vulnerable to misinformation.

The findings highlight a clear conclusion: moderation is the single most important factor in preventing the spread of misinformation. Groups that are highly active but lack oversight, or where most members remain passive, are seen as particularly susceptible. In contrast, groups with active administrators or mechanisms for fact-checking create a sense of accountability, reducing the likelihood that false information will spread and increasing trust among members.

At the same time, group composition also matters. Respondents tend to view groups focused on investment or political topics as especially prone to misinformation. Groups composed of strangers with shared interests are also perceived as less trustworthy, suggesting that anonymity plays a significant role in shaping perceptions of credibility.

Search Engines as a Key Gateway for Misinformation

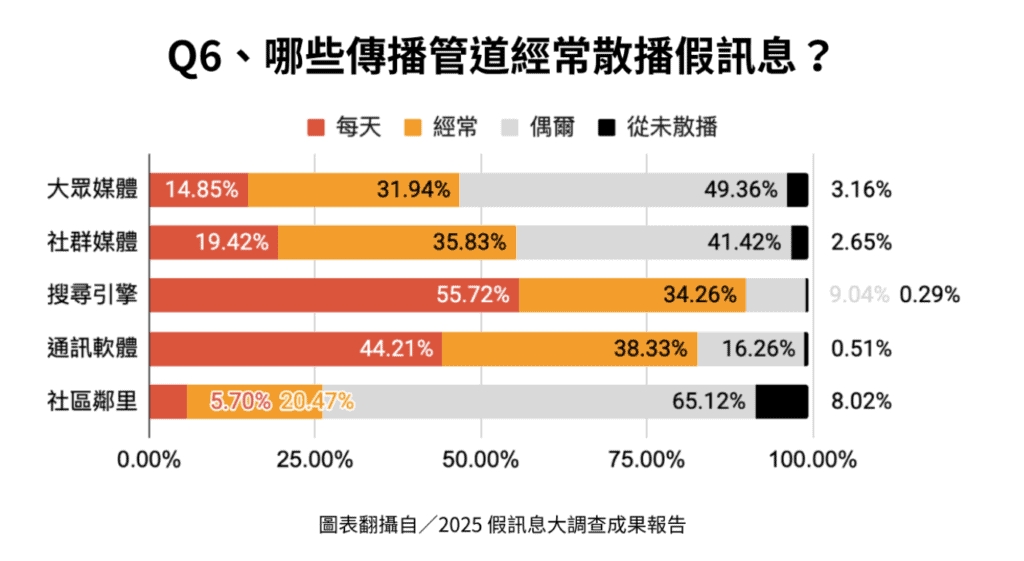

Another important finding concerns how people encounter misinformation. Nearly 90% of respondents believe that search engines frequently expose users to false information, making them the most significant channel—followed by messaging apps and social media platforms.

This suggests that misinformation risks are not limited to social media alone, but are structurally embedded in the broader information ecosystem. From search results to recommendation systems, the mechanisms that guide users toward information may also amplify exposure to misleading or false content.

Growing Demand for Regulation and Platform Responsibility

Public expectations for addressing misinformation are high. More than 90% of respondents support government legislation to regulate misinformation, while 76.6% believe that technology companies should restrict its spread—even if doing so may affect freedom of expression.

In addition, 90.7% support policies requiring greater transparency in platform algorithms, and a majority believe that platforms should take greater responsibility for ensuring the quality of information circulating online.

These findings reflect a growing consensus that combating misinformation is not solely an individual responsibility, but a shared obligation among governments, platforms, and society at large.

Rising Awareness and Trust in Fact-Checking

Amid these challenges, the survey offers encouraging signs. Public awareness of, use of, and trust in fact-checking organizations have all increased significantly. Some 79.23% of respondents are now aware of fact-checking organizations, up sharply from 58.6% in 2023, while 67.49% report having used fact-checking services. Nearly 70% express positive views of these organizations.

Although fact-checking in Taiwan often faces accusations of political bias, the data suggests that public trust remains strong. This indicates that fact-checking continues to play a crucial role in supporting information integrity and public understanding.

Methodology

The survey was conducted through the National Taiwan University web survey platform. It targeted residents across Taiwan aged 20 and above, with data collected between October 25 and 30, 2025. The research team conducted three rounds of sampling from the platform’s member database, resulting in a total of 2,755 valid responses. At a 95% confidence level, the margin of error is ±1.87 percentage points.
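For readers who want to check the reported margin of error, the sketch below reproduces it from the sample size. It is an illustration only: the report does not say how the research team computed the figure, so the code assumes the conventional formula for a proportion under simple random sampling, with the worst-case proportion of 0.5 and a z-value of 1.96 for 95% confidence. The function name `margin_of_error` is hypothetical.

```python
import math


def margin_of_error(n: int, z: float = 1.96, p: float = 0.5) -> float:
    """Margin of error for a proportion, in percentage points.

    Assumptions (not stated in the survey report): simple random
    sampling, worst-case proportion p = 0.5, and z = 1.96 for a
    95% confidence level.
    """
    return 100 * z * math.sqrt(p * (1 - p) / n)


# 2,755 valid responses, as reported in the methodology
moe = margin_of_error(2755)
print(f"±{moe:.2f} percentage points")  # ±1.87 percentage points
```

Under these assumptions, the result matches the ±1.87 percentage points given in the report.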

*Editor’s Note: This article was originally published in Chinese. It was translated with the help of AI and has been carefully reviewed and edited for an international audience.